Artificial Intelligence: Global Equity Beneficiaries

AI/ML Basket & Trend Analysis

Executive Summary

Objective

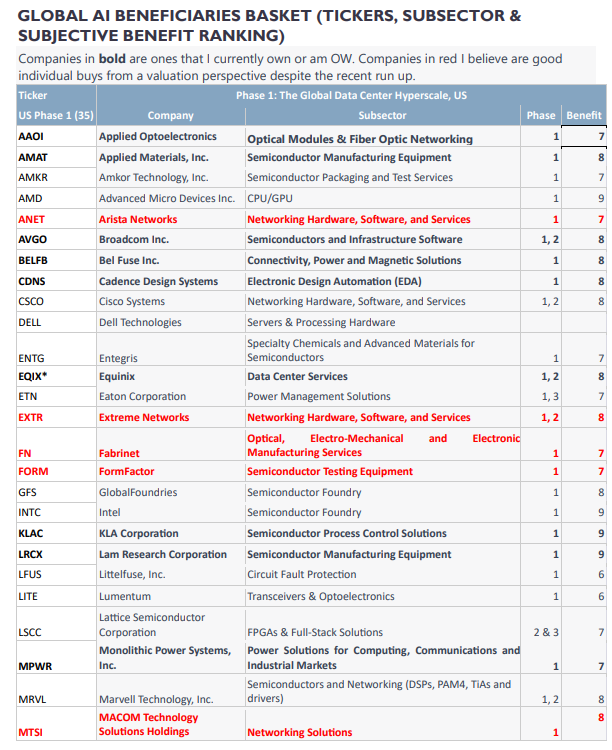

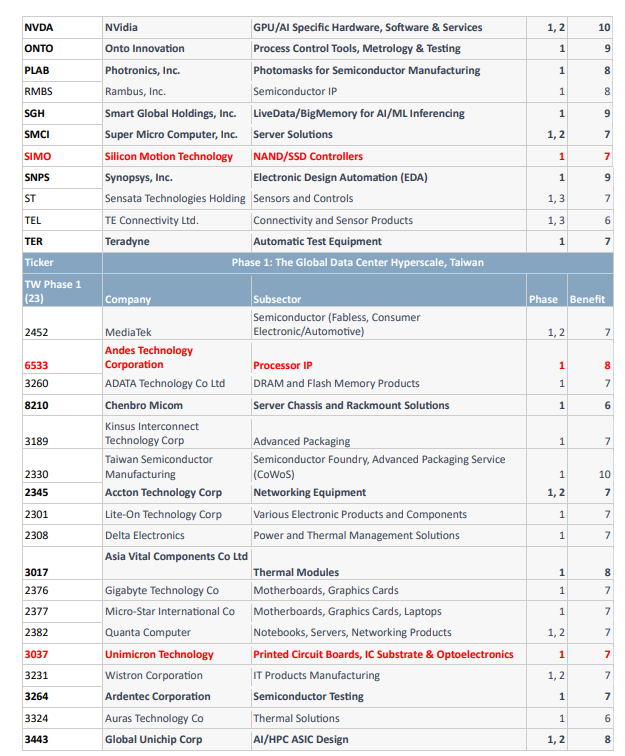

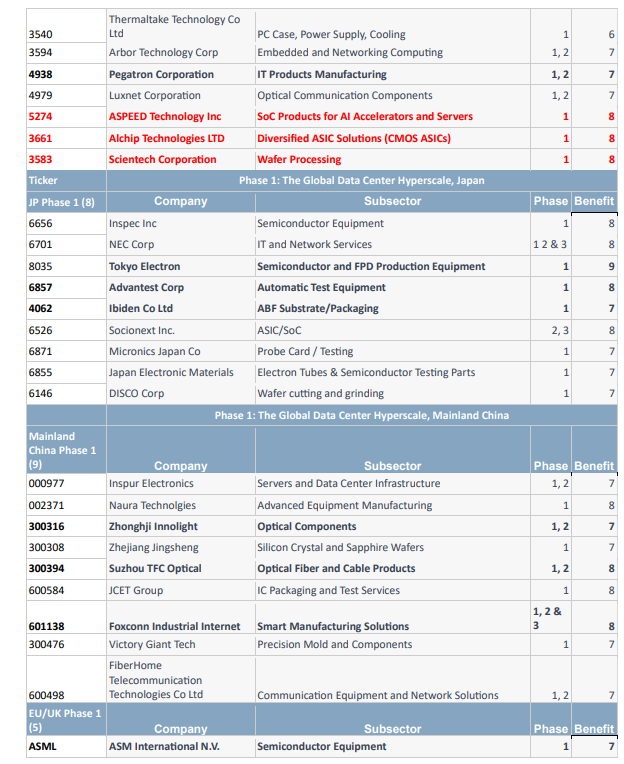

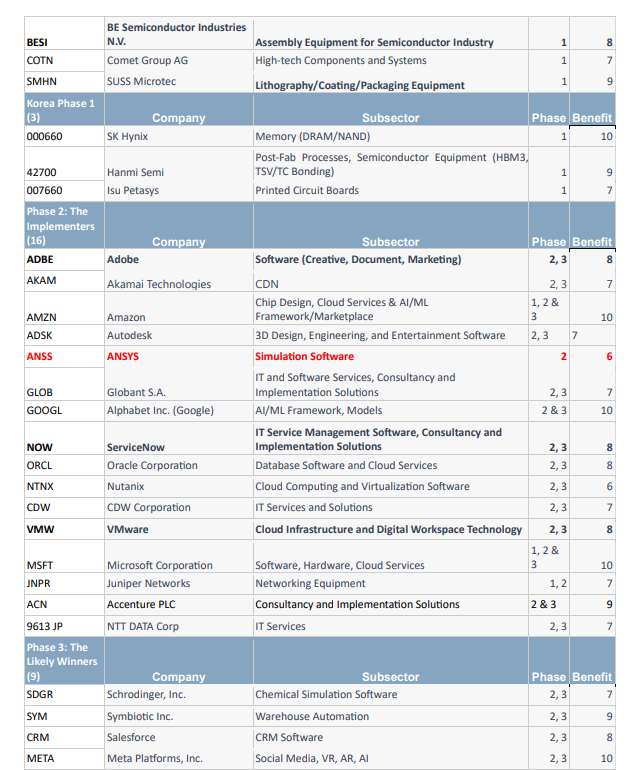

Create a basket of international equities, focused on capturing the AI/ML and accelerated computing paradigm shift, that generates selection alpha and outperforms both the broader equity market and other AI-focused baskets. The basket uses a valuation-sensitive selection process and a triphasic framework for the technological paradigm of artificial intelligence, accelerated computing and robotics/automation.

Reasoning

Existing baskets focus on predicting the winners and treat data centers as simply a means to an end. Yet comments from the best-informed AI pioneers show that data centers will be at the heart of any AI boom: they are the only certainty about what AI/ML will look like in the future, the “picks and shovels” of the paradigm shift. Additionally, due to potential macro risks, they have retained relatively low multiples on fears of overearning. This provides a unique opportunity, although the window to take advantage of the rerating is rapidly closing.

Methodology

Due to the recent and significant outperformance of large-cap technology, forward expected returns for some of the most obvious beneficiaries are now lower, while their potential to accrue additional AI-related gains is only incrementally greater, even in the most extreme cases (such as Nvidia). Therefore, using a framework of the most likely path and contributors for the progression of the AI theme, along with a model of data center capex spending as the theme ramps, we form a universe of global equities and pare it back for redundancy, supply chains, the percentage of revenue potentially associated with AI/ML/deep learning, and other advantages and disadvantages. We then overweight the companies presenting a margin of safety or poised to significantly outperform. I will list the companies I am currently overweight in my own portfolios and will follow up on my positioning as developments for these companies unfold.
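The paring-back step can be sketched as a simple screen. This is a minimal illustration, not the actual model behind the basket: the `Candidate` fields (`slot`, `ai_revenue_share`, `fwd_pe`), the 20% AI-revenue threshold and the placeholder tickers are all hypothetical assumptions. Redundancy is handled by keeping only the cheapest name (lowest forward P/E) in each supply-chain slot, one crude proxy for margin of safety.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Candidate:
    ticker: str              # placeholder identifier (hypothetical, not a real holding)
    slot: str                # hypothetical supply-chain role, e.g. "HBM" or "optics"
    ai_revenue_share: float  # estimated fraction of revenue tied to AI/ML, 0..1
    fwd_pe: float            # forward price/earnings multiple


def pare_universe(universe: List[Candidate], min_ai_share: float = 0.2) -> List[Candidate]:
    """Drop low-exposure names, then keep the cheapest name per supply-chain slot."""
    best: Dict[str, Candidate] = {}
    for c in universe:
        if c.ai_revenue_share < min_ai_share:
            continue  # too little AI/ML-linked revenue to matter
        incumbent = best.get(c.slot)
        # Redundancy filter: two names filling the same slot add little diversification,
        # so keep the one with the lower forward multiple (a crude margin-of-safety proxy).
        if incumbent is None or c.fwd_pe < incumbent.fwd_pe:
            best[c.slot] = c
    return sorted(best.values(), key=lambda c: c.ticker)


if __name__ == "__main__":
    universe = [
        Candidate("CO_A", "HBM", 0.45, 14.0),
        Candidate("CO_B", "HBM", 0.50, 22.0),
        Candidate("CO_C", "optics", 0.30, 18.0),
        Candidate("CO_D", "optics", 0.05, 9.0),
    ]
    print([c.ticker for c in pare_universe(universe)])  # ['CO_A', 'CO_C']
```

A real screen would score many more dimensions (supply-chain position, balance sheet, the "50+ metrics" in the spreadsheet version); the point here is only the two-stage shape: filter on exposure, then de-duplicate on valuation.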

I am putting this behind a paywall. I’m planning to publish 2-3 in-depth trend analysis articles per year with international equity baskets, plus one year-ahead piece (see my “23 trades for 2023” thread, the cosmetics trend analysis, or the Q3 2022 posts on SVB for a feel of whether you’ll be interested). Posts about financial history etc. will still be free.

Please click here for the below article in PDF format (much better formatting, much easier to read if on mobile or tablet).

Click here for the basket in excel format, sorted by phase (valuation ratios only, for viewing).

Click here for the basket as a list, with no formatting, with 50+ metrics to screen by and more fundamentals for each company (if you’d like to find individual stocks to buy out of the basket, use this).

Triphasic Model for Forecasting AI Beneficiaries

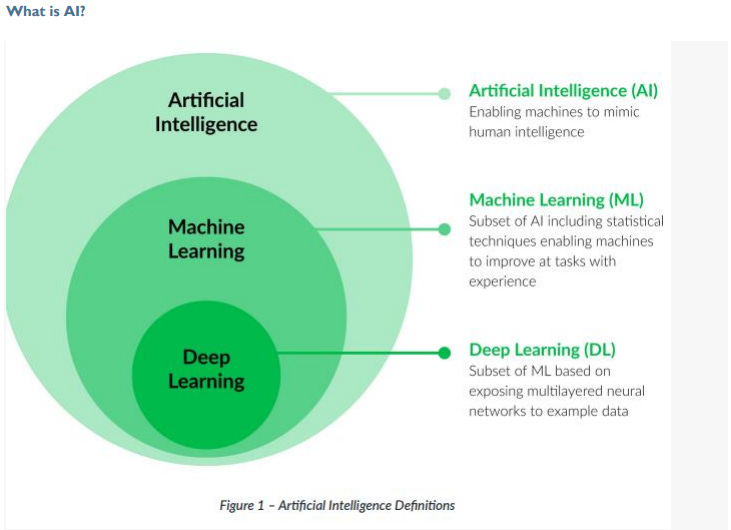

AI, deep learning and accelerated computing are not “new”, but they have passed what Malcolm Gladwell calls the “tipping point”. Visits to ChatGPT and Google searches for it are growing exponentially, with numbers that rival the internet or the iPhone.

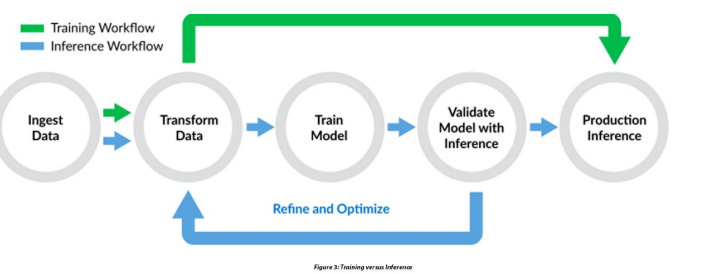

My framework for viewing AI/ML uses three phases. The further a phase is from phase 1, the more uncertain its winners, and the higher the risk in owning them (but, potentially, also the higher the reward). These phases will not occur in a linear fashion; they are all happening simultaneously. But the outcomes of phases 2 and 3 are much harder to predict than phase 1, so in this framework I overweight the beneficiaries of the first phase.
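One way to express “overweight phase 1” is a multiplicative tilt by phase followed by normalization. The 3:2:1 tilt and the placeholder tickers below are hypothetical assumptions for illustration, not the weighting actually used in the basket.

```python
from typing import Dict, Optional

# Hypothetical tilt: a phase 1 name gets 3x the raw weight of a phase 3 name.
DEFAULT_TILT: Dict[int, float] = {1: 3.0, 2: 2.0, 3: 1.0}


def phase_tilted_weights(phase_by_ticker: Dict[str, int],
                         tilt: Optional[Dict[int, float]] = None) -> Dict[str, float]:
    """Assign each holding a raw weight by its phase, then normalize to sum to 1."""
    tilt = DEFAULT_TILT if tilt is None else tilt
    raw = {ticker: tilt[phase] for ticker, phase in phase_by_ticker.items()}
    total = sum(raw.values())
    return {ticker: w / total for ticker, w in raw.items()}


if __name__ == "__main__":
    # Placeholder tickers, one per phase.
    print(phase_tilted_weights({"CO_A": 1, "CO_B": 2, "CO_C": 3}))
    # CO_A 0.5, CO_B ~0.333, CO_C ~0.167
```

Because the tilt is applied per holding, the basket’s total phase 1 share also grows with the number of phase 1 names, which compounds the overweight.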

The first phase involves the proliferation and growth of artificial intelligence (AI) and machine learning (ML) among corporate clients and is centered on the development and build-out of robust computational infrastructure. This creates a massive and sustained tailwind for suppliers of the accelerated-computing data center equipment required for both AI training and inference. The second phase involves the services that will enable the democratization of artificial intelligence. Software, applications, and consumer- and business-facing implementations will all need to be built; the winners here, again, will be the companies that provide the tools others build on top of. The rail cars are not worth nearly as much as the railroad. Let’s call these solutions the infrastructure of AI’s implementation (while the first phase is more the infrastructure of AI’s existence and propagation).

The third phase is when the winners emerge: which AI or machine learning use case has the greatest market potential, and which implementation ends up revolutionizing the way we live?

History shows that the path of technology is difficult to predict once you try to look too far out. Additionally, macro concerns such as slowing consumer demand continue to affect the global economy, and we do not yet know how cloud providers and other software companies will be affected by increased AI capex spending. Thus, I believe focusing on data centers offers a better risk/reward proposition: the “picks and shovels” (I said this 12 months before The Economist did) will benefit regardless.

We will create a basket of stocks purpose-built for the best possible risk/reward in this paradigm: one that retains maximum leverage to tailwinds from AI/ML training and inference while minimizing the impact of potential downturns in consumer spending and corporate cloud spending.

Phase 1: The Global Data Center Hyperscaling

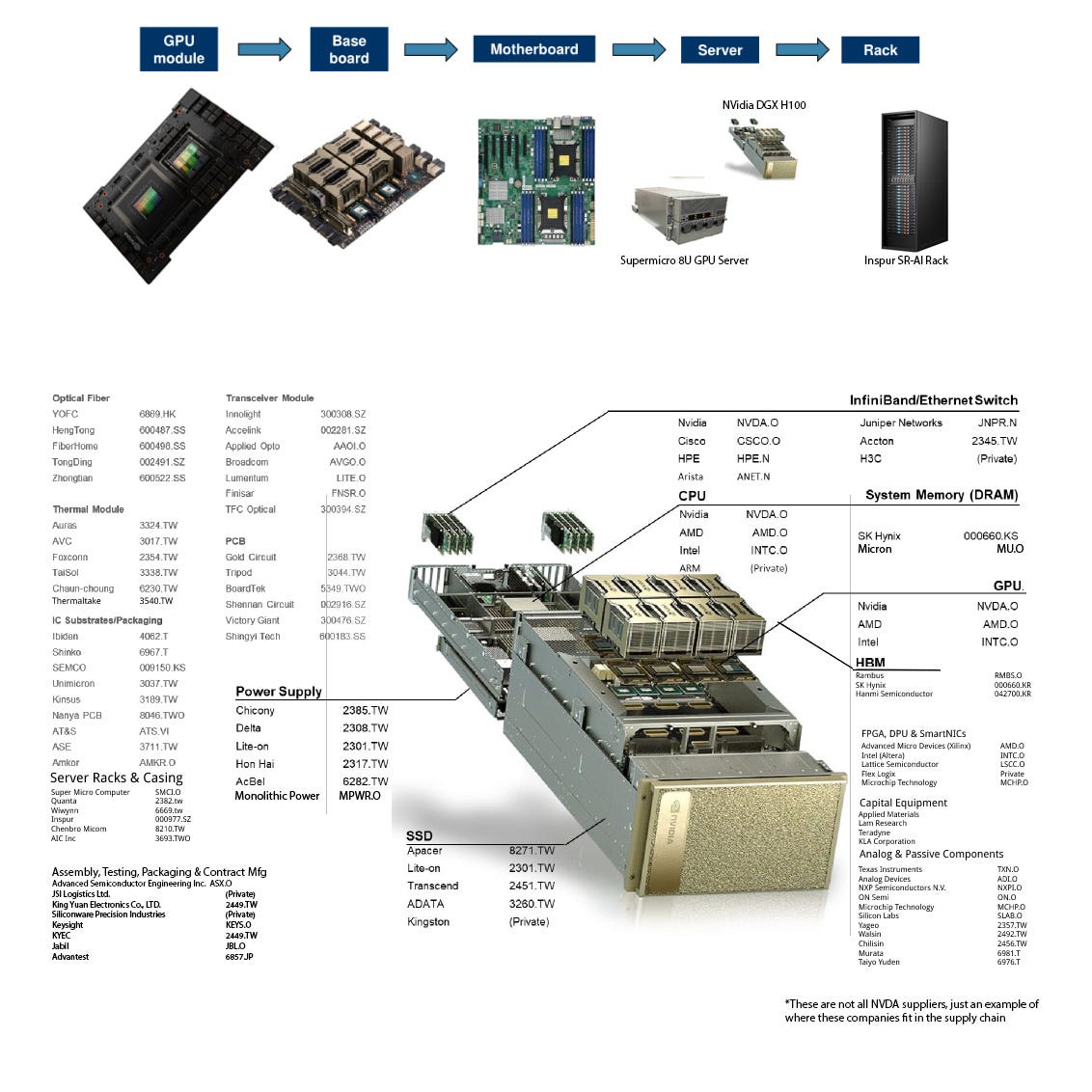

Data centers have become, and will continue to be, the backbone of machine learning computation, using servers packed with powerful GPUs and, later, specialized accelerators designed specifically for machine learning workloads, such as Tensor Processing Units (TPUs), FPGAs and ASICs. Technologies for distributed computing will mature, combining the processing power of a network of machines to work on large-scale machine learning tasks and rewarding companies whose portfolios are significantly focused on advanced networking.

In addition to hardware, the first phase also involves significant development of the software stack for machine learning, including the creation and refinement of machine learning platforms and frameworks like TensorFlow, PyTorch and Keras. These make it easier for engineers and researchers to develop and implement machine learning models by abstracting away many of the complexities of the underlying computations and hardware. Ultimately, phase 1 is the bottleneck for phases 2 and 3: without robust computing capacity, everything else is limited.

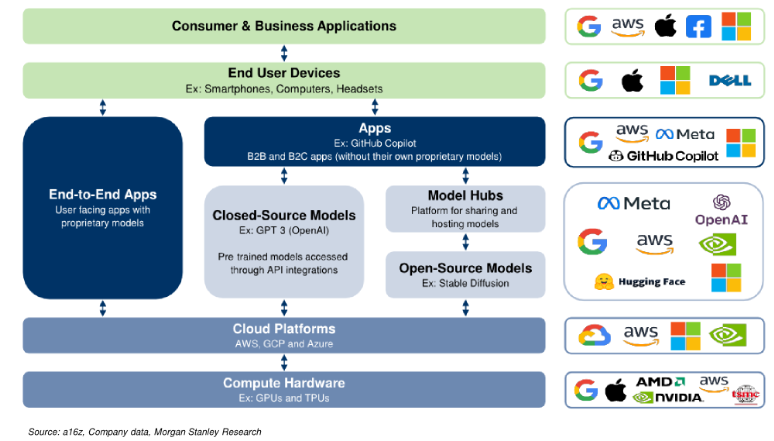

*The above picture includes some companies that are not part of the current NVDA supply chain. However, it provides a good indication of what fits where.

Phase 2: The Democratization of AI/ML

The second phase involves the democratization of AI and ML. With the infrastructure of phase 1 in place, the technology is refined and developed by major players and upstarts alike. Novel applications are rolled out to consumers and businesses, with some failing and others succeeding to become indispensable to the way we go about our lives. The tools and capabilities these developers build will reach a tipping point at which the median consumer finds them both accessible and intuitive; this has the potential to begin an increase in productivity akin to the one seen in the late 1990s. Major tech companies continue to offer increasingly broad cloud-based AI services, providing affordable access to powerful computational resources without the need to build out new data center capacity. This will significantly lower the barrier to entry for startups and individual developers. This phase may run in parallel with the first, but the first phase will remain the bottleneck until the build-out is sufficiently scaled.

Simultaneously, there will be a surge in open-source software and pre-trained models, allowing developers to easily fine-tune models for specific tasks without starting from scratch. Resources for learning about AI and ML, including online courses and tutorials, will proliferate among businesses and consumers alike. Daily active user growth will see a sustained period of exponential increase.

Phase 3: Integration and Specialization

The third phase is marked by the deeper integration of AI and ML into various industries and aspects of society, as well as further specialization within the field of AI itself. The technologies described as “artificial intelligence” may not resemble the LLMs we currently use, and one or more services will provide “agents” capable of carrying out tasks without being prompted directly (an “AI assistant”).

AI and ML technologies will have been integrated into everything from healthcare, where they're used for medical imaging and disease prediction, to finance, where they're used for fraud detection and financial forecasting, to transportation, where they're used for self-driving cars and route optimization.

Simultaneously, the field of AI will see increased specialization. Some researchers and companies focus on specific subsets of AI, such as natural language processing, computer vision, or reinforcement learning. Others focus on specific applications or industries. This specialization allows for greater expertise and more targeted advancements.

The rapid advancements in accelerated computing, edge AI and explainable AI are also notable aspects of this phase, promising to further enhance the capabilities and extend the reach of AI technologies. Future directions include continual learning, AI alignment, and the development of common sense reasoning in AI systems.

Phase 1: Data Center Hyperscaling Beneficiaries

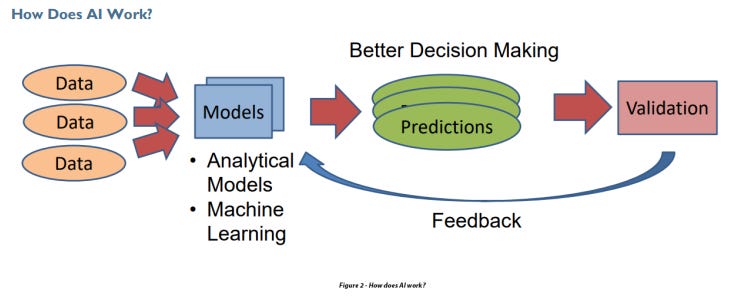

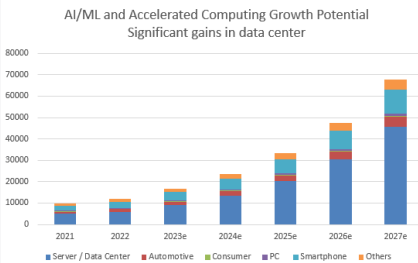

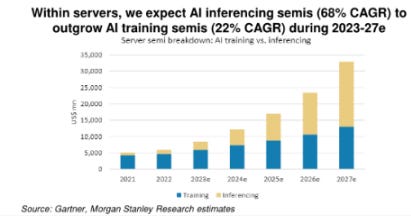

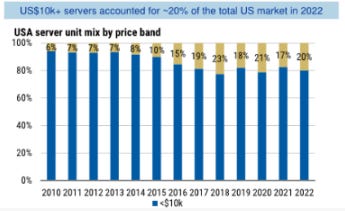

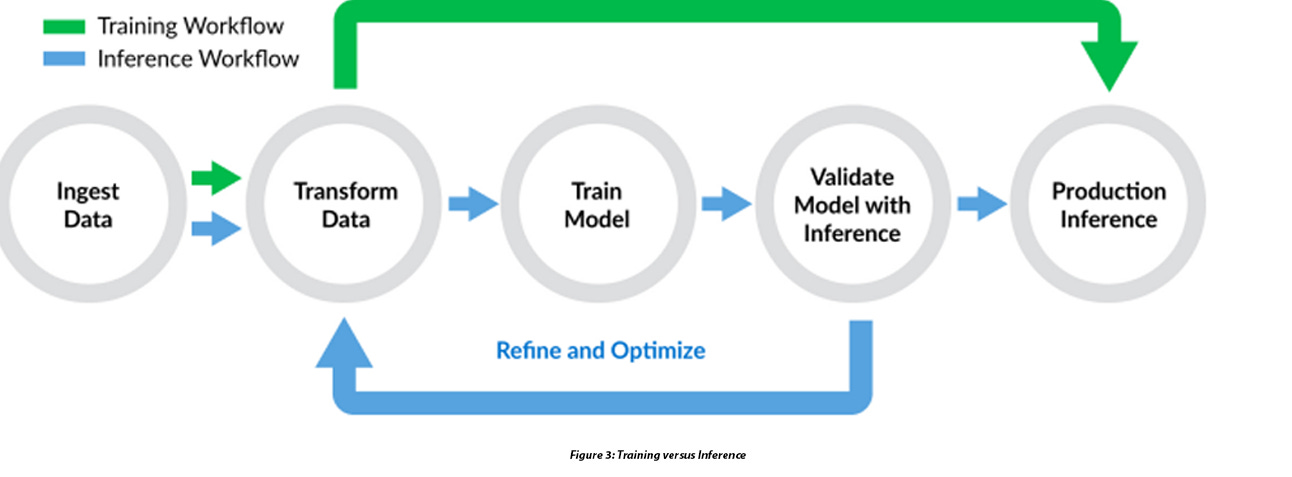

The first phase of the proliferation and growth of artificial intelligence (AI) and machine learning (ML) can be characterized as the foundational period in which the necessary hardware infrastructure is established. This phase primarily revolves around the build-out of data centers capable of the accelerated computing required for training and inference in AI and ML applications. Data center capacity will need to scale rapidly to meet demand for training, but mostly for inference. The most significant opportunities in the next 16-24 months will come from the retooling of data center capability. By breaking down the data center, from inside the chip, to the interconnections between chips, to the equipment that ensures their safety and efficiency, we can find the primary beneficiaries of this phase. Companies with exposure to higher-end data center customers, whose net spending intentions will be spurred on by the advance of AI, will feature prominently.

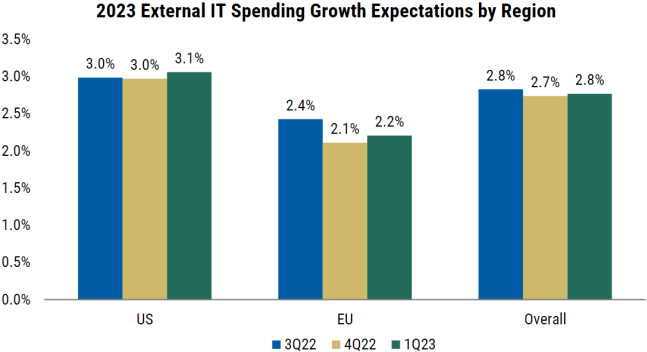

Recent surveys of CIOs show a moderate increase in expected external IT spending; however, the nature of OpenAI’s offering means that a significant share of data center demand may come from outside the C-suite. Developers already have concerns over downtime and availability. A significant amount of data center demand in the next decade may come from companies that don’t currently exist, or at least don’t currently exist in a state where they respond to Morgan Stanley’s surveys.

Source: Morgan Stanley Research

The enormous computing power required for AI/ML places considerable demands on a variety of technology sectors. The companies involved in providing the necessary infrastructure stand to benefit significantly from this heightened demand. This includes businesses involved in semiconductor manufacturing, networking equipment, data center construction and management, and more. The required infrastructure is indeed extensive and multifaceted, ranging from chip manufacturing and connectivity technologies to human-computer interface solutions. Let's discuss some specific areas and associated companies.

Semiconductor Manufacturing: This involves creating the physical chips that are the brains of the AI/ML operations. This includes companies that manufacture the raw materials for chips, the companies that design and build the chips themselves, and those that provide the equipment necessary for chip manufacturing.

Companies like Taiwan Semiconductor Manufacturing Company (TSMC) and GlobalFoundries (GFS), which are involved in semiconductor foundry services, stand to benefit significantly.

Chip manufacturing equipment providers such as ASML, Tokyo Electron, Applied Materials, Disco Corp, KLA and Advantest also stand to benefit as the demand for high-quality, AI-capable chips increases.

EDA and Semiconductor IP: Electronic design automation (EDA) and semiconductor intellectual property (IP) companies, like Cadence Design Systems and Synopsys, play a crucial role in designing and verifying the chips and systems used in AI/ML. The key roles of EDA/IP are:

Semiconductor Design and Production: EDA tools are critical in designing and producing semiconductors, the fundamental components of the computing infrastructure that powers AI and ML. These tools help in designing, simulating, and verifying complex circuit designs before they're fabricated. They streamline the process of creating advanced processors, GPUs, and other specialized hardware needed for machine learning tasks.

Hardware Acceleration: Machine learning tasks, particularly deep learning, are computation-heavy and can benefit significantly from hardware acceleration. The custom-designed ASICs, TPUs, and other accelerators that speed up these tasks require sophisticated EDA tools for their design and production.

IP Cores: Semiconductor IP cores are pre-designed modules that serve specific functions within a chip. They allow for quicker and more efficient chip design by enabling designers to incorporate standard functionalities without designing from scratch. This accelerates the development of customized chips that can handle specific AI and ML tasks.

Design for Manufacturability (DFM): The complexities of chip design and the scale of modern data center infrastructure mean that manufacturing yield is a key concern. EDA tools help design chips that not only meet performance and power requirements but are also optimized for successful manufacturing.

Enable Innovation: By streamlining the chip design process and reducing time-to-market, EDA tools and semiconductor IP allow researchers and companies to experiment with new hardware architectures and technologies. This fosters innovation and can lead to new, more effective ways of performing AI and ML computations.
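The design-for-manufacturability point can be made concrete with the classic Poisson die-yield model, Y = exp(-A·D0), where A is die area and D0 is defect density. Real foundries use more refined models (for example negative binomial), and the defect density below is an illustrative assumption, not a published figure for any process. The sketch shows why large AI accelerator dies make yield a first-order design concern.

```python
import math


def poisson_yield(die_area_mm2: float, defects_per_cm2: float) -> float:
    """Fraction of dies expected to be defect-free under a Poisson defect model."""
    area_cm2 = die_area_mm2 / 100.0  # 100 mm^2 = 1 cm^2
    return math.exp(-area_cm2 * defects_per_cm2)


if __name__ == "__main__":
    d0 = 0.1  # hypothetical defect density, defects per cm^2
    for area in (100, 400, 800):  # small mobile-class die up to a large accelerator-class die
        print(f"{area} mm^2 -> {poisson_yield(area, d0):.1%} good dies")
```

Under these assumptions an 800 mm² die yields roughly 45% good dies versus roughly 90% for a 100 mm² die, which is why EDA-driven yield optimization (and chiplet approaches) matter so much for AI silicon.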

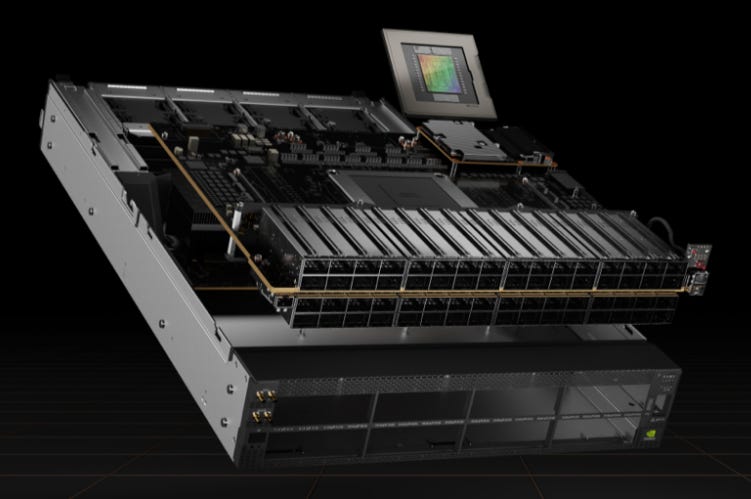

Graphics Processing Units: From AI and data analytics to high-performance computing (HPC), data centers are key to solving some of the most important challenges. NVIDIA’s end-to-end accelerated computing platforms, integrated across hardware and software, give enterprises the blueprint for a robust, secure infrastructure that supports develop-to-deploy implementations across all modern workloads. Even advanced CPUs, which run at high clock speeds, are not capable of the extreme level of parallel execution required (a factor limited by the number of “threads”). A GPU has thousands of threads along which parallel execution can occur, performing trillions of floating point operations per second (FLOPS).

Grace Hopper includes the Transformer Engine, a custom Hopper Tensor Core technology that dramatically accelerates the AI calculations for Transformers. At each layer of a Transformer model, the Transformer Engine analyzes the statistics of the output values produced by the Tensor Core. With knowledge of which type of neural network layer comes next and what precision it requires, the Transformer Engine also decides which target format to convert the tensor to before storing it to memory. To make optimal use of the available range, it dynamically scales tensor data into the representable range using scaling factors computed from the tensor statistics. Therefore, every layer operates with exactly the range it requires and is accelerated in an optimal manner. The Grace Hopper Superchip is the most important single unit in the data center.
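The per-tensor scaling idea can be sketched in plain Python. This is a simplified illustration, not NVIDIA’s Transformer Engine API: it computes a scale from the tensor’s maximum absolute value (amax) so the largest value lands at the top of the FP8 range, then clips. It does not model FP8 mantissa rounding or the amax history that production implementations track. The constants are the largest finite values of the two FP8 formats Hopper supports: E4M3 (448) and E5M2 (57344).

```python
from typing import List, Tuple

E4M3_MAX = 448.0    # largest finite FP8 E4M3 value (more precision, less range)
E5M2_MAX = 57344.0  # largest finite FP8 E5M2 value (more range, less precision)


def scale_to_fp8_range(values: List[float], fp8_max: float = E4M3_MAX) -> Tuple[List[float], float]:
    """Scale a tensor so its largest magnitude maps to fp8_max; return (scaled, scale)."""
    amax = max((abs(v) for v in values), default=0.0)
    scale = fp8_max / amax if amax > 0 else 1.0
    # Clipping guards against overflow; a real FP8 cast would also round each value here.
    scaled = [max(-fp8_max, min(fp8_max, v * scale)) for v in values]
    return scaled, scale


def unscale(scaled: List[float], scale: float) -> List[float]:
    """Undo the scaling (dequantize) before the values are used at higher precision."""
    return [v / scale for v in scaled]


if __name__ == "__main__":
    # All of these sit below E4M3's smallest normal value (2**-6), so unscaled they
    # would lose most of their precision in FP8.
    activations = [0.001, -0.002, 0.0005]
    scaled, s = scale_to_fp8_range(activations)
    print(s, scaled)
    print(unscale(scaled, s))
```

Choosing `fp8_max` per tensor mirrors the format decision described above: E4M3 is typically used where precision matters (forward activations and weights) and E5M2 where range matters (gradients).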

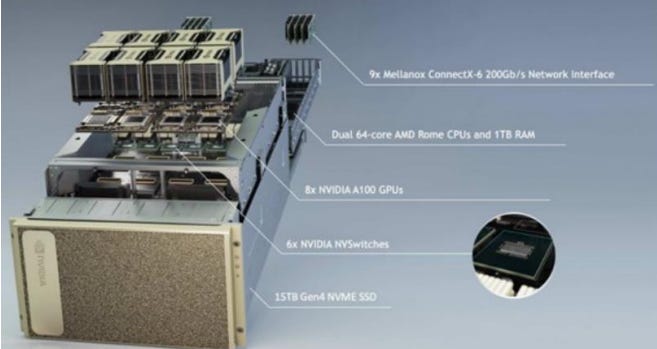

Nvidia’s DGX GH200 scales to up to 256 GPUs with interconnectivity between every Grace Hopper superchip using the NVLink Switch System. This is the absolute cutting edge. The system is designed for the singular purpose of maximizing AI throughput, providing enterprises with a highly refined, systemized, and scalable platform to help them achieve breakthroughs in natural language processing, recommender systems, data analytics, and much more. Basic GPU specifications:

NVIDIA DGX GH200 is a 24-rack cluster with an all-Nvidia architecture, based on the Grace Hopper superchip (a 72-core Arm-based Grace CPU with 512GB of LPDDR5X memory), that boasts ~144TB of memory (20TB of HBM3 and 124TB of LPDDR5X).

NVIDIA DGX H100 is an 8U rack-mounted system with dual Intel Xeon CPUs, eight H100 GPUs and ~8 NICs.

NVIDIA H100 is a GPU with ~80 GB of HBM3, 14,592 CUDA cores, 456 Tensor Cores and PCIe 5.0 x16.

NVIDIA A100 is a GPU with 80 GB of HBM2e, 6,912 CUDA cores, 432 Tensor Cores and PCIe 4.0 x16.

Other companies, such as Advanced Micro Devices and Intel, offer GPUs, and Broadcom designs custom AI accelerators, but none have Nvidia’s advantage.

Power Supply Units: Specialized compute units double power density in the data center, and Nvidia’s latest offerings require power supply units capable of maximum efficiency and cost-effective operation. Companies like Vicor Corporation, Monolithic Power, Lite-On and others will benefit.

Data Center Infrastructure: This includes the physical infrastructure necessary to house and cool the servers, as well as the companies that provide services for managing these data centers.

Equinix is an example of a company that provides data center services and stands to benefit from the increased demand for high-power computing capabilities.

Companies like Pegatron Corporation, Super Micro Computer, Inc. and Foxconn Industrial Internet, which provide smart manufacturing solutions and server infrastructure, are also likely to benefit.

Companies like Thermaltake, Cooler Master and Vertiv are essential due to the massive amount of heat generated by AI/ML and accelerated computing. Vertiv also offers liquid cooling: current Nvidia GPU servers use air cooling, but liquid may play a role in the future.

Mission-critical suppliers that guard against failure through testing, and those that protect against the primary vulnerabilities of data center infrastructure (such as electrical faults), will benefit, including Bel Fuse, FormFactor, Sensata and Littelfuse.

Optical: Given the massive data requirements of AI/ML workloads, companies providing fiber optic solutions are also important as they enable high-speed data transmission over long distances.

Companies like Yangtze Optical Fibre and Cable JSC, Suzhou TFC Optical and Applied Optoelectronics, which specialize in optical fiber and cable products, stand to benefit in this regard.

Companies providing packaging and manufacturing for interconnection, including process design and failure analysis, will also benefit as high-speed data transmission becomes a necessity. Fabrinet is one example.

Memory: AI/ML servers exceed traditional servers (including cloud servers) in compute power and memory capacity because of their extensive requirements for multiple, parallel and rapid workflows. To achieve optimal performance in parallel computing using GPUs, it is crucial to have high memory bandwidth and low latency; together these enable faster processing and improved workflows for AI applications. The right combination of GPUs and memory is essential to maximize performance, and each GPU has specific memory needs. Swift and efficient memory and storage operations are critical for speedy "ingest, transform, and decide" processes, which ultimately determine the success or failure of an AI application.
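Why bandwidth matters can be illustrated with a simple roofline-style estimate: a kernel’s runtime is bounded below by the slower of its compute time (FLOPs / peak FLOP/s) and its memory time (bytes moved / memory bandwidth). The peak and bandwidth figures below are illustrative round numbers, not specifications of any particular GPU, and the byte counts ignore caching.

```python
from typing import Tuple


def roofline_time(flops: float, bytes_moved: float,
                  peak_flops: float, mem_bw: float) -> Tuple[float, str]:
    """Return (lower-bound runtime in seconds, which resource is the bottleneck)."""
    compute_t = flops / peak_flops
    memory_t = bytes_moved / mem_bw
    return max(compute_t, memory_t), ("memory" if memory_t > compute_t else "compute")


PEAK = 1e15  # illustrative: 1 PFLOP/s of 16-bit math
BW = 3e12    # illustrative: 3 TB/s of HBM bandwidth

n = 8192
# Matrix-vector product with an n x n FP16 weight matrix (like generating one token
# at batch size 1): ~2n^2 FLOPs while reading ~2n^2 bytes of weights.
gemv = roofline_time(2 * n**2, 2 * n**2, PEAK, BW)
# Matrix-matrix product of two n x n FP16 matrices: ~2n^3 FLOPs, ~3 * 2n^2 bytes (A, B, C).
gemm = roofline_time(2 * n**3, 3 * 2 * n**2, PEAK, BW)

if __name__ == "__main__":
    print("GEMV bound by", gemv[1])  # memory
    print("GEMM bound by", gemm[1])  # compute
```

The batch-1 matrix-vector case is memory-bound by a wide margin, which is one reason HBM capacity and bandwidth feature so prominently in AI accelerators, especially for inference.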

SK Hynix is a key beneficiary of NVDA’s AI opportunity: high-performance HBM (High Bandwidth Memory) is used in the H100 GPUs and is driving significant new demand growth for memory, along with 128GB of DRAM for the AI server system. DRAM is poised to benefit more than NAND. Nvidia’s DGX GH200 uses ~20TB of HBM, and the rest is DRAM (LPDDR5X).

Hanmi Semiconductor is a beneficiary as a developer of hybrid bonding processes and a manufacturer of the bonding equipment indispensable for attaching high-bandwidth memory (HBM) to the high-performance GPUs essential for artificial intelligence (AI).

Hanmi is a key player in through-silicon via (TSV) technology and a beneficiary of increased GPU demand due to Nvidia’s CoWoS manufacturing flow. Hanmi supplies customers with bonding equipment for attaching HBM to high-performance GPUs and is responsible for a proprietary low-temperature TSV process that connects stacked chips in 3D packaging (allowing vertical interconnections without damaging the wafer). The firm manufactures equipment such as TSV/TC (thermocompression) bonders for stacking semiconductor dies.

TSV and multiple-die bonding technologies enable advanced 3D/2.5D packaging with stacked memory, logic-on-logic and multi-chip modules. Hanmi’s expertise in TSV, hybrid bonding and micro-bump interconnects allows it to support complex heterogeneous integration packaging. CoWoS uses TSVs to allow optimized integration of high-performance components but has higher cost and complexity.

For die bonding, Hanmi provides services for both chip-to-chip and chip-to-wafer stacking. Their techniques include thermocompression bonding and thermosonic bonding to join dies using heat, pressure, and ultrasonic energy. Hanmi has die bonding capability for high I/O density interconnects down to sub-10μm pitch. This is important for high performance applications. They also support hybrid bonding which utilizes both metal diffusion bonds and oxide bonds to join chip or wafer surfaces.
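The significance of sub-10μm pitch is easiest to see as arithmetic: on a square grid, connections per mm² scale with the inverse square of the pitch. The 40μm comparison pitch below is an illustrative assumption for a coarser micro-bump array, not a figure for any specific product.

```python
def connections_per_mm2(pitch_um: float) -> float:
    """Interconnects per square millimetre for a square grid with the given pitch."""
    per_mm = 1000.0 / pitch_um  # 1 mm = 1000 um
    return per_mm ** 2


if __name__ == "__main__":
    fine, coarse = connections_per_mm2(10), connections_per_mm2(40)
    print(fine, coarse, fine / coarse)  # 10000.0 625.0 16.0
```

Shrinking pitch by 4x yields 16x the I/O density in the same footprint, which is what allows the very wide memory interfaces HBM-to-GPU stacking depends on.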

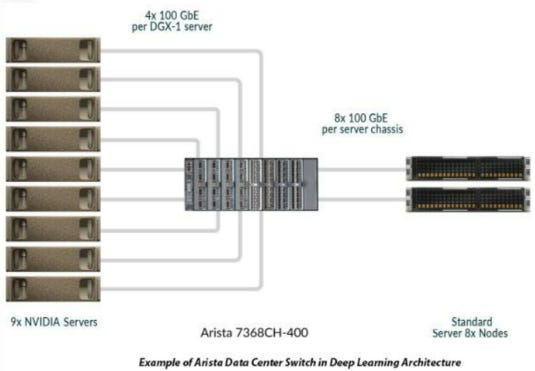

Connectivity and Interconnectivity: As AI/ML applications require huge amounts of data to be transferred quickly and efficiently, connectivity solutions are of paramount importance. This includes companies that manufacture networking equipment and provide high-speed data transfer technologies.

Connectivity is the common data fabric. Deep learning works across neural networks, with each GPU module functioning somewhat like a neuron. Larger datasets increase accuracy but also increase the demand for computation and storage performance. Multiplying the number of servers greatly increases performance, but each of them needs adequate access to the data (via a shared parallel file system, hence the name “parallel computing”).
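When many GPUs train one model in parallel, each training step typically ends with an all-reduce: every GPU contributes its gradients and every GPU receives the sum. Below is a minimal single-process simulation of ring all-reduce, one common algorithm, shown as an illustration rather than a description of any specific vendor library. It also shows why interconnect bandwidth matters: each of N nodes sends 2(N-1)/N of the full gradient size per step, so per-GPU traffic barely shrinks as more GPUs are added.

```python
from typing import List


def ring_allreduce(node_data: List[List[float]]) -> List[List[float]]:
    """Simulate ring all-reduce: every node ends up with the element-wise sum."""
    n = len(node_data)
    length = len(node_data[0])
    if length % n != 0:
        raise ValueError("vector length must split evenly into one chunk per node")
    size = length // n
    # chunks[i][c] is node i's current copy of chunk c.
    chunks = [[list(vec[c * size:(c + 1) * size]) for c in range(n)] for vec in node_data]

    # Phase 1, reduce-scatter: after n-1 steps node i owns the full sum of chunk (i+1) % n.
    for step in range(n - 1):
        # Snapshot all outgoing messages first so the sends within one step are simultaneous.
        sends = [(i, (i - step) % n, list(chunks[i][(i - step) % n])) for i in range(n)]
        for sender, c, payload in sends:
            receiver = (sender + 1) % n
            chunks[receiver][c] = [a + b for a, b in zip(chunks[receiver][c], payload)]

    # Phase 2, all-gather: pass the completed chunks around the ring, overwriting.
    for step in range(n - 1):
        sends = [(i, (i + 1 - step) % n, list(chunks[i][(i + 1 - step) % n])) for i in range(n)]
        for sender, c, payload in sends:
            chunks[(sender + 1) % n][c] = payload

    return [[x for c in range(n) for x in chunks[i][c]] for i in range(n)]


def bytes_sent_per_node(gradient_bytes: float, n: int) -> float:
    """Each node sends 2(n-1) chunks, each of size gradient_bytes / n."""
    return 2 * (n - 1) * gradient_bytes / n


if __name__ == "__main__":
    grads = [[1, 2, 3], [10, 20, 30], [100, 200, 300]]  # toy "gradients" on 3 GPUs
    print(ring_allreduce(grads))  # [[111, 222, 333], [111, 222, 333], [111, 222, 333]]
```

With 8 GPUs each one sends 1.75x the full gradient size every step; with 256 it is close to 2x, so adding GPUs adds compute without relieving the network.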

Networking specialists such as Arista Networks, Nvidia and Cisco Systems will play a significant role, extending the highly demanding GPU-to-GPU communication within servers to communication between GPU servers in a “non-blocking architecture”.

Other considerations in the area of Connectivity are:

Companies pursuing low power and high bandwidth over extremely short distances are exploring silicon disaggregation by moving high-speed interfaces like SerDes onto separate dies in the form of SerDes chiplets. If this technology solves these potential bottlenecks cost-effectively, its providers would see significant gains; one such company is Credo Technology Group Holding.

Companies providing high-speed interconnect technology, such as Mellanox (now part of Nvidia), the leading InfiniBand supplier, and Inphi (owned by Marvell), offer connectivity that is crucial for AI/ML applications. Nvidia recently introduced its Spectrum-4 Ethernet switch, which, paired with the 400Gb/s BlueField-3 SmartNIC, aims to bring InfiniBand-like performance to Ethernet. It also offers the H100 CNX, a PCIe card that combines an H100 GPU with a Mellanox ConnectX-7 SmartNIC. InfiniBand has many of the attributes of a good connectivity product for AI/ML/DL, including:

High Bandwidth and Low Latency: Machine learning, particularly deep learning, involves significant amounts of data and computation. Training these models requires the ability to quickly transfer large amounts of data between servers and processors. InfiniBand provides high bandwidth and low latency, which means data can be transferred quickly and without much delay, making it ideal for these tasks.

Scalability: InfiniBand is highly scalable, making it well suited for large data centers that may need to add more servers as their needs grow. It can support thousands of endpoints in a single subnet, and its architecture allows for the seamless addition of more nodes.

Reliability: InfiniBand uses advanced error checking and correction mechanisms to ensure data integrity, which is crucial when working with large volumes of data and complex computations. It's also designed to be resilient, with failover capabilities that can help maintain system uptime even in the event of hardware failures.

Support for RDMA: InfiniBand supports Remote Direct Memory Access (RDMA), which allows data to be transferred directly from the memory of one computer to the memory of another without involving the processor. This frees up processor resources and can significantly increase the speed and efficiency of data transfers, which is critical for machine learning workloads.

Interoperability and Flexibility: InfiniBand is not tied to a specific hardware or software stack, providing flexibility and interoperability among different systems. This allows data centers to choose hardware based on their specific needs without worrying about compatibility issues.
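The bandwidth and latency properties listed above combine in the standard latency-bandwidth (alpha-beta) cost model: transfer time = latency + size / bandwidth. The link parameters below are hypothetical round numbers, not InfiniBand specifications. The “half-bandwidth” message size, latency × bandwidth, marks where a link delivers half its peak throughput; below it, latency dominates.

```python
def transfer_time(size_bytes: float, latency_s: float, bytes_per_s: float) -> float:
    """Alpha-beta model: fixed per-message latency plus serialization time."""
    return latency_s + size_bytes / bytes_per_s


def effective_bandwidth(size_bytes: float, latency_s: float, bytes_per_s: float) -> float:
    """Throughput actually achieved for a single message of the given size."""
    return size_bytes / transfer_time(size_bytes, latency_s, bytes_per_s)


def half_bandwidth_size(latency_s: float, bytes_per_s: float) -> float:
    """Message size at which effective bandwidth is exactly half the peak."""
    return latency_s * bytes_per_s


if __name__ == "__main__":
    LAT, BW = 1e-6, 50e9  # hypothetical link: 1 microsecond latency, 50 GB/s
    n_half = half_bandwidth_size(LAT, BW)  # 50,000 bytes
    for size in (1_000, n_half, 100_000_000):
        print(f"{size:>12,.0f} B -> {effective_bandwidth(size, LAT, BW) / 1e9:5.1f} GB/s")
```

With these assumptions a 1 KB message achieves about 1 GB/s, a message at the half-bandwidth size achieves 25 GB/s, and a 100 MB gradient bucket gets close to the 50 GB/s peak. Cutting latency helps the many small messages; raising bandwidth helps the large ones, and AI clusters need both.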

Human-Computer Interfaces: As AI/ML technologies are incorporated into more products and services, companies that create interfaces between these technologies and users will also be necessary.

Companies such as Microsoft and ServiceNow, which provide software solutions that enable users to interact with AI/ML technologies, are likely to see increased demand.

Nvidia’s latest system, the DGX GH200, combines 256 Grace Hopper Superchips, connected by 36 NVLink Switches, to provide over 1 exaflops of FP8 AI performance with 144TB of unified memory, 900 GB/s of GPU-to-GPU bandwidth and 128 TB/s of bisection bandwidth. Dylan Patel of SemiAnalysis (a great resource) has made the salient point that while this is currently a cutting-edge piece of equipment, given the scale of AI/ML and the resources necessary, it is “tomorrow’s mass-market”.
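Dividing the system-level figures for the 256-superchip DGX GH200 by the number of superchips gives a quick sanity check on what each node contributes. This is back-of-the-envelope arithmetic on the quoted numbers only, treating “TB” as decimal terabytes and “over 1 exaflops” as exactly 1e18 FLOP/s, so the per-chip FLOP/s result is a floor.

```python
SUPERCHIPS = 256
FP8_FLOPS_TOTAL = 1e18   # "over 1 exaflops" of FP8, taken as a floor
UNIFIED_MEMORY_TB = 144  # quoted unified memory, decimal terabytes


def per_chip(total: float, n: int = SUPERCHIPS) -> float:
    """Even split of a system-level total across n superchips."""
    return total / n


if __name__ == "__main__":
    print(f"FP8 per superchip: >= {per_chip(FP8_FLOPS_TOTAL) / 1e15:.2f} PFLOP/s")  # 3.91
    print(f"Memory per superchip: ~{per_chip(UNIFIED_MEMORY_TB) * 1000:.1f} GB")  # 562.5
```

Using the 20TB HBM3 / 124TB LPDDR5X split quoted for the system, the same division gives roughly 78 GB of HBM3 and 484 GB of LPDDR5X per superchip, all addressable as one memory pool.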

Phase 2: The Democratized AI/ML Ecosystem Beneficiaries

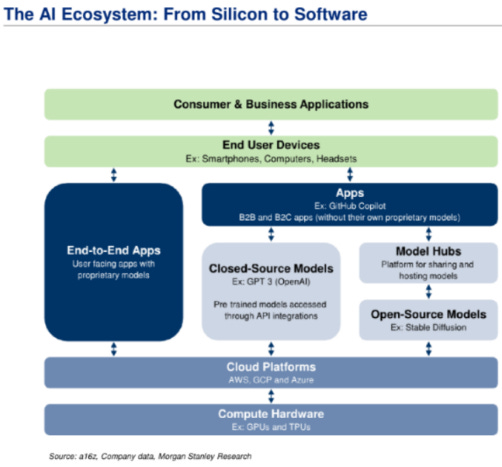

The AI and Machine Learning (ML) ecosystem from silicon to software comprises a broad spectrum of components, including hardware, software, data centers, cloud services, and applications. Let's look at each of these in more detail:

Silicon (Hardware): This includes processors designed specifically to accelerate AI workloads, such as GPUs, TPUs, and specialized AI chips. These chips are critical to handling the vast computational demands of AI/ML models in both training and inference.

Major players include NVIDIA and Advanced Micro Devices (AMD), which provide powerful GPUs used in both training and inference of AI models. Inference is the more costly of the two and is key to refining, optimizing and maximizing the output of models.

Intel with its range of CPUs and specialized AI chips like the Nervana series.

Google has developed its own Tensor Processing Units (TPUs) for use in its data centers.

Apple and Qualcomm develop chips for mobile devices that include AI acceleration capabilities.
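The claim that inference is the more costly phase can be checked with widely used rules of thumb for dense transformers: training costs roughly 6·N·D FLOPs (N parameters, D training tokens) and inference roughly 2·N FLOPs per token processed. These approximations ignore attention overhead, hardware utilization and pricing, and the model size and token counts below are hypothetical. Under them, cumulative inference compute overtakes training once a model has served about 3·D tokens.

```python
def training_flops(params: int, train_tokens: int) -> int:
    """Rule-of-thumb training compute for a dense transformer: ~6 * N * D."""
    return 6 * params * train_tokens


def inference_flops(params: int, served_tokens: int) -> int:
    """Rule-of-thumb forward-pass compute: ~2 * N per token processed."""
    return 2 * params * served_tokens


def breakeven_served_tokens(train_tokens: int) -> int:
    """Tokens served at which cumulative inference compute equals training compute."""
    # 2 * N * T = 6 * N * D  =>  T = 3 * D, independent of model size N.
    return 3 * train_tokens


if __name__ == "__main__":
    N, D = 70 * 10**9, 2 * 10**12  # hypothetical: 70B parameters, 2T training tokens
    T = breakeven_served_tokens(D)
    print(f"break-even after {T:.2e} served tokens")  # 6.00e+12
```

A widely deployed model can serve that many tokens quickly, after which every additional query is incremental inference demand on data center hardware.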

Data Centers and Cloud Providers: These companies provide the infrastructure necessary to store, process, and transmit the massive amounts of data used in AI/ML workloads. They also often provide cloud-based platforms for developing and deploying AI models.

Amazon Web Services (AWS), Microsoft Azure, and Google Cloud are the leading cloud providers that offer a range of AI/ML services. Nvidia has recently announced a cloud service specifically suited to AI use.

Companies like Equinix provide data center services, but only companies with significant Tier 3 data center exposure will benefit without having to significantly increase CapEx (Digital Realty does not have facilities as advanced as EQIX).

AI/ML Frameworks and Platforms: These software libraries and platforms simplify the development of AI/ML models by providing pre-defined functions, algorithms, and model structures.

Google's TensorFlow, Facebook's PyTorch, and Microsoft's Cognitive Toolkit (CNTK) are among the most popular AI/ML frameworks.

Cloud providers like AWS, Azure, and Google Cloud also offer platform services designed to support AI/ML development.

Model Hubs and AI Marketplaces: These are platforms that provide pre-trained models and algorithms that developers can use as a starting point for their own applications.

Google's AI Hub, Hugging Face Model Hub, and the AWS Marketplace for Machine Learning are good examples.

Business Applications and Implementations: These include software and services that leverage AI to provide improved functionality for business tasks, such as analytics, automation, customer relationship management (CRM), and more.

AI/ML Solutions, Digital Transformation and Talent/Expertise are the major offerings and resources at play in the application area of phase two.

IBM Watson, Salesforce Einstein, and Microsoft Dynamics 365 AI are platforms that incorporate AI into a wide range of business applications.

Companies like Oracle, Adobe, Autodesk, Palo Alto Networks, Globant and VMware are likely to see significant benefit from the integration or provision of AI/ML solutions.

Government Rollout: Defense contractors may benefit from implementing AI/ML/deep learning for weapon targeting systems, scenario analysis and threat assessment; Palantir currently leads this space. Additionally, government demand to revamp existing data centers that hold confidential information will likely benefit CDW through its current supply contracts (and, in turn, the companies CDW sources from, like NVDA).

Consumer Applications: These are applications aimed at everyday consumers that use AI in some capacity, such as voice assistants, recommendation systems, and personalized marketing.

Companies like Apple, Google, Amazon and Microsoft offer AI-infused products like Siri, Google Assistant, Alexa and Cortana, respectively. Meta Platforms (formerly Facebook), Google, Amazon and similar companies will use AI for consumer applications.

The AI/ML ecosystem is rapidly evolving and expanding, with new players entering the field and existing ones continually developing new products and services. Additionally, novel AI/ML solutions threaten existing oligopolies and current paradigms in business services, an essential consideration for a basket that may not be altered once constructed. Therefore, the emphasis in phase two will be less on companies that use AI for their own purposes and more on the companies that allow others to implement AI in their businesses. Some phase two winners do not currently exist or are only now being funded, but this phase will not be as unpredictable as phase 3.

The only thing we can say with certainty at this phase is that AI/ML use will proliferate, as it already has, and generate novel solutions and products. Anticipating how it does so, or what it looks like, is in my opinion a fool’s errand, given how rife such forecasts are with the potential to fall flat. Do giant legacy companies come up against a flood of innovative upstarts, as we saw in the dot-com bubble? Is there a new golden era of computing in which they thrive? One can attempt an answer, but it is fraught with uncertainty. However, regardless of what happens, AI will require the rails upon which the technology is built and implemented. Additionally, there is a sort of “corporate FOMO”: even if the monetization of AI for consumer or business applications falls short of expectations, we can be relatively certain that if a large company has a competitor implementing AI, it will do so too. By focusing on the companies that enable this never-ending corporate technological chase, we position ourselves to be most likely to see tangible benefits. Thus, we focus on the implementers of phase two.

Phase 3: Integration and Specialization Beneficiaries

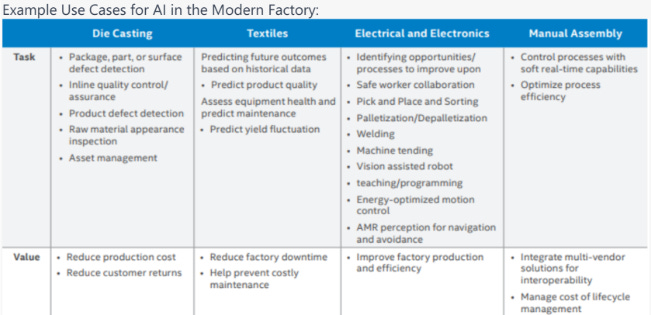

Phase Three, as described earlier, involves the integration of AI/ML into industries, applications, and systems across the spectrum. This phase is characterized by the widespread application of AI/ML, leading to transformative changes in many industries and even in society at large. While it is the sexiest, most attractive and transformative phase, it is also the most speculative. Positions which benefit more from phase three than any other phase are bound to carry the most execution risk and run the potential of being disrupted before benefit can be recognized.

Because of this, we focus on trends that are readily observable and demonstrably benefit from the proliferation of AI without extrapolation (companies that are currently using AI and will see clear benefits at the earliest onset). Companies facing significant or existential risk from AI, such as many SaaS companies and companies whose business models rely on tools that customers can easily and quickly phase out, are excluded by default. The primary focus is on companies with access to a wealth of data whose value will increase significantly as AI makes it more widely usable. Regardless, the companies in this phase are the most speculative and path dependent.

1. Schrodinger: Schrodinger is a leading provider of advanced molecular simulations and enterprise software solutions and services. Its platforms are used extensively in materials science and life sciences for drug discovery. In Phase Three, the growth of AI/ML could enhance Schrodinger's drug discovery platform, enabling more accurate simulations and predictions.

2. Symbotic: Symbotic Inc. is a provider of automation solutions for warehouses and distribution centers. With AI/ML technologies, automated warehouse systems can become more efficient and intelligent. This includes automated picking and packing systems, sorting systems, and even autonomous vehicles for transporting goods within the warehouse. AI-powered systems can handle complex tasks, adapt to changes, and learn over time to improve their performance. AI is crucial to developing machine vision and sensor systems and to reducing their cost, and the data collected by SYM will only become more valuable in Phase Three.

3. Salesforce: Salesforce is a global leader in customer relationship management (CRM) software. They have been integrating AI into their services with their AI platform, Einstein. In Phase Three, AI could become even more integral to Salesforce's offerings, enabling more personalized customer experiences, better sales predictions, and more efficient business processes.

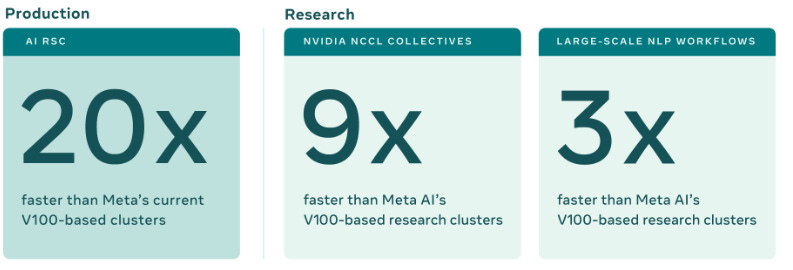

4. Meta Platforms: Meta Platforms, with its transition into a company focusing on building the "metaverse," could be a significant player in Phase Three. By leveraging AI/ML, Meta Platforms can create more immersive, personalized experiences in virtual reality, augmented reality, and social media. AI/ML can also help the company better understand user behavior, optimize advertising, and maintain community standards. Meta’s “AI Research SuperCluster” (RSC) is one of the fastest supercomputers in the world, a huge starting advantage in the phase three space:

Source: Meta Platforms (January 2022)

Using 2,000 NVIDIA DGX A100 systems, for a total of 16,000 NVIDIA A100 Tensor Core GPUs, connected via an NVIDIA Quantum InfiniBand 16 Tb/s fabric network, Meta Platforms has done a significant amount of work in machine translation. This could become a global advantage in AI/ML, because much of the current development is focused on the English language.

5. Rockwell Automation: Rockwell Automation provides industrial automation and digital transformation solutions. As manufacturing and other industrial sectors increasingly adopt AI/ML in Phase Three, Rockwell could provide AI-powered automation solutions that significantly improve efficiency, safety, and productivity. This could include predictive maintenance, quality control, process optimization, and other Industry 4.0 applications.

6. Palo Alto Networks: Palo Alto Networks’ acquisition of Gamma.AI, an AI-driven chat security company, could give Palo Alto Networks enhanced capabilities for securing AI/ML platforms as they become ubiquitous. This move is particularly significant given the increasing trend towards remote work and reliance on chat and collaboration tools. Gamma.AI's technology could allow Palo Alto to identify malicious content and insider threats across these platforms in real time, since AI can scan vast amounts of data for unusual patterns or anomalies and so help detect and respond to cyber threats more quickly and accurately. Especially during the transition to the age of accelerated computing, new threats will emerge and companies will have to fight them on two fronts: public-facing LLMs must remain resilient to attack, while legacy cloud continues to face increasingly sophisticated risks.
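As a rough illustration of what "scanning data for unusual patterns or anomalies" means in practice, the sketch below flags values that deviate sharply from a trailing baseline using a rolling z-score. This is a generic statistical technique chosen for illustration, not Palo Alto Networks' or Gamma.AI's method; the function name, window, threshold and message counts are all hypothetical. ML-based detectors generally replace the fixed mean and standard deviation with a learned model of normal behavior, but the "flag what departs from the baseline" logic is similar.

```python
from statistics import mean, stdev


def flag_anomalies(values, window=5, threshold=3.0):
    """Return the indices whose value deviates from the mean of the
    preceding `window` values by more than `threshold` sample standard
    deviations (a rolling z-score). Hypothetical helper for illustration."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Perfectly flat baseline: any change at all is unusual.
            if values[i] != mu:
                flagged.append(i)
            continue
        if abs(values[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical per-minute message counts for one account on a chat platform.
counts = [12, 10, 11, 13, 12, 11, 10, 95, 12, 11]
print(flag_anomalies(counts))  # [7]: only the spike to 95 is flagged
```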

These companies illustrate the broad range of applications for AI/ML in Phase Three, spanning drug discovery, CRM, social media and virtual reality, warehouse and industrial automation, and cybersecurity. It is important to note that the Phase Three basket does not target the companies that will win most significantly, only those most likely to gain from the third phase in general, regardless of which outcome(s) materialize.

Potential Phase Three Scenarios:

Scenario 1: The "Hyper-Personalization" Future

In this scenario, AI/ML is leveraged primarily to create hyper-personalized experiences in various domains, from consumer goods to digital platforms and healthcare.

· Salesforce could be a major beneficiary, as its CRM platform could use AI/ML to provide businesses with unprecedented levels of customer insight, driving personalized marketing and customer service strategies.

· Adobe and Autodesk could benefit by providing AI-powered tools for creating personalized digital content.

· Globant could provide bespoke software solutions that integrate AI/ML for personalized user experiences.

· In the healthcare sector, Schrodinger and AbCellera might excel by using AI to personalize drug treatments based on a patient's genetic profile.

· With technology ever more deeply embedded in our lives, including something as intrusive as a potential “agent” (an AI personal assistant), Palo Alto Networks’ technology becomes increasingly important.

Scenario 2: The "Automated World" Future

In this scenario, AI/ML is predominantly used to automate processes across various sectors, from manufacturing to logistics and services.

· Symbotic Inc. could emerge as a major player by providing AI-powered automation solutions for warehouses and distribution centers.

· Rockwell Automation might excel by providing AI-driven automation solutions to the manufacturing industry.

· ServiceNow and Microsoft could stand out by automating IT services and business processes using AI.

· Amazon, or whoever creates the most successful “agent” (a language model capable of carrying out tasks such as shopping, setting up meetings and other assistant duties without being prompted), would benefit as the primary conduit for e-commerce through the AI lens.

· In the semiconductor space, ASML, Lam Research, Cadence and Synopsys could benefit from increased demand for the advanced chips that power automation technologies.

Scenario 3: The "Virtual Reality" Future

In this scenario, AI/ML is used primarily to create immersive virtual reality (VR) experiences, also known as the metaverse, which transforms the way people interact with digital platforms.

· Meta Platforms could be a significant beneficiary, with its focus on building the metaverse.

· Companies like Nvidia and Advanced Micro Devices could benefit enormously as their GPUs become essential for rendering VR experiences.

· Microsoft and Apple could also be major players with their mixed reality platform (HoloLens) and augmented reality platform, respectively.

· Autodesk and PTC Inc. could leverage AI to create immersive 3D models for use in VR environments.

Each of these scenarios reflects a different direction that the evolution of AI/ML technologies could take, and the beneficiaries in each case would depend on which applications of AI become most prevalent. In all three scenarios, use of AI/ML-capable data centers increases exponentially. In the semiconductor space, Phase Three is most likely associated with FPGAs, SmartNICs, DPUs and AI/ML-specific ASICs.

GLOBAL AI BENEFICIARIES: TICKER LIST & CHARTS

Please view using either the PDF linked at the top of the document or the spreadsheets, as this may be hard to read on a mobile device or tablet:

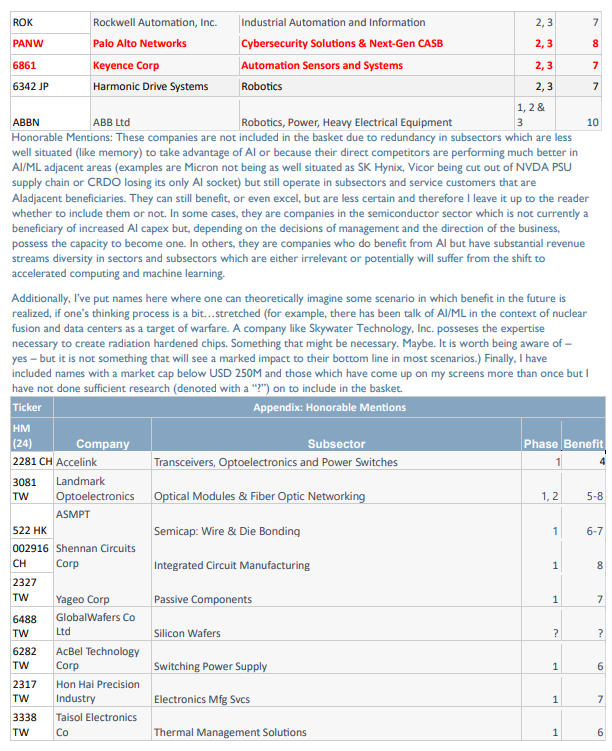

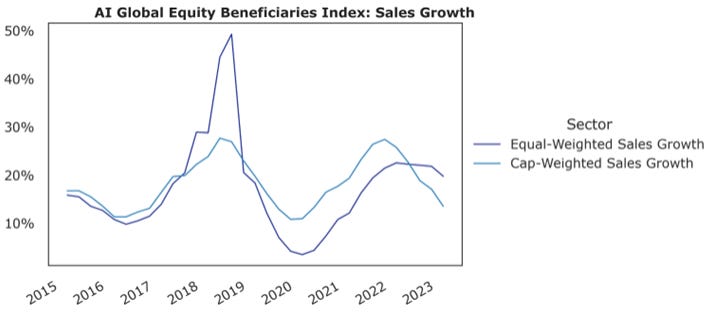

The charts below assume semi-annual rebalancing and equal weighting, with all currency exposure hedged to USD.
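The chart assumptions (equal weighting, semi-annual rebalancing, currency exposure hedged to USD) can be sketched roughly as follows. This is a minimal illustration, not the model used to produce the charts: it assumes monthly per-asset returns that are already hedged to USD, ignores hedging costs, carry and transaction costs, and the function names and sample data are hypothetical.

```python
def basket_returns(period_returns, rebalance_every=6):
    """Per-period returns of an equal-weighted basket with periodic rebalancing.

    period_returns: one list per period of per-asset simple returns, assumed
        to be already hedged to USD (hedging costs and carry are ignored).
    rebalance_every: periods between rebalances; 6 means semi-annual on
        monthly data.
    """
    n_assets = len(period_returns[0])
    weights = [1.0 / n_assets] * n_assets
    basket = []
    for t, rets in enumerate(period_returns):
        if t % rebalance_every == 0:
            # Reset to equal weights on each rebalance date.
            weights = [1.0 / n_assets] * n_assets
        basket.append(sum(w * r for w, r in zip(weights, rets)))
        # Between rebalances, weights drift with each holding's performance.
        grown = [w * (1 + r) for w, r in zip(weights, rets)]
        total = sum(grown)
        weights = [g / total for g in grown]
    return basket


def cumulative_growth(returns):
    """Growth of $1 compounded through a series of period returns."""
    value = 1.0
    for r in returns:
        value *= 1 + r
    return value


# Hypothetical two-asset example over three periods.
sample = [[0.10, 0.00], [0.00, 0.00], [0.10, -0.10]]
print([round(r, 4) for r in basket_returns(sample, rebalance_every=2)])  # [0.05, 0.0, 0.0]
print([round(r, 4) for r in basket_returns(sample, rebalance_every=3)])  # [0.05, 0.0, 0.0048]
```

The two calls show why the rebalancing frequency matters: when weights are reset before the third period, the two assets' moves cancel exactly, whereas without a reset the winner from the first period carries a slightly larger weight and the basket returns about 0.48%.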

To avoid taking a directional view on the market, one could go long the basket and short SMH, either as a ratio or in full.
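One way to read the long basket / short SMH idea is sketched below: "in full" as a one-for-one dollar short, and "as a ratio" either as tracking the basket/SMH price ratio or as sizing the short by an estimated hedge ratio such as beta. These interpretations, the function names and the return figures are assumptions for illustration. The sketch ignores borrow, financing and transaction costs, and it assumes the hedge is reset every period.

```python
from statistics import mean


def estimate_beta(basket_rets, smh_rets):
    """OLS slope of basket returns on SMH returns: cov(basket, SMH) / var(SMH)."""
    mb, ms = mean(basket_rets), mean(smh_rets)
    cov = sum((b - mb) * (s - ms) for b, s in zip(basket_rets, smh_rets))
    var = sum((s - ms) ** 2 for s in smh_rets)
    return cov / var


def long_short_returns(basket_rets, smh_rets, hedge_ratio=1.0):
    """Per-period return of $1 long the basket and $hedge_ratio short SMH.

    hedge_ratio=1.0 is a full, dollar-neutral hedge; a beta-based ratio
    sizes the short to offset only the basket's estimated sensitivity to SMH.
    """
    return [b - hedge_ratio * s for b, s in zip(basket_rets, smh_rets)]


def price_ratio(basket_levels, smh_levels):
    """Basket/SMH level ratio; a rising line means the basket is outperforming."""
    return [b / s for b, s in zip(basket_levels, smh_levels)]


# Hypothetical monthly returns (not actual basket or SMH data).
smh = [0.04, -0.03, 0.06, -0.02]
basket = [0.03, -0.01, 0.05, 0.00]
beta = estimate_beta(basket, smh)
print(round(beta, 2))  # 0.62
print(long_short_returns(basket, smh))        # short SMH "in full"
print(long_short_returns(basket, smh, beta))  # beta-weighted short
```

A full hedge removes the most sector exposure but can leave the position net short semiconductor beta if the basket moves less than SMH; a beta-sized short aims to isolate the basket's relative performance instead.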

This is an advancement in reasoning AI; robotics will not be a big beneficiary. But robotic automation could